MochiWeb vs. Cowboy, HTTP vs. WebSocket benchmark

Posted by Idorobots

Lately I've been banging around a lot in order to get up to speed with Erlang/OTP and its various web-server libraries, and I figured I could share some of my findings here for future reference and in general for The Greater Good.

Test server

As you might have already noticed, this benchmark concerns two of the best-known Erlang web-server libraries, MochiWeb and Cowboy, and it aims to explore their behaviour under considerable load.

The server in question uses two communication protocols, HTTP and WebSocket, so as an added benefit we'll see how these two compare. Unfortunately, MochiWeb doesn't have built-in support for WebSocket, so an external library was needed. The rather simple client API is defined as:

- http://host/poll/N - an HTTP chunked reply with one chunk of data sent every N seconds,

- ws://host/wspoll/N - a WebSocket connection with one chunk sent every N seconds.
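To make the two endpoints a bit more concrete, here's a minimal Python sketch of how a client or test harness might map a request path to a protocol and a chunk interval. The helper name `parse_poll_path` and the shape of its return value are assumptions made for illustration; they are not taken from the actual test server.

```python
import re

# Hypothetical helper: recognises "/poll/N" and "/wspoll/N",
# with an optional trailing slash.
_POLL_RE = re.compile(r"/(ws)?poll/(\d+)/?")

def parse_poll_path(path):
    """Return (protocol, interval_seconds), or None for any other path."""
    match = _POLL_RE.fullmatch(path)
    if match is None:
        return None
    protocol = "websocket" if match.group(1) else "http"
    return protocol, int(match.group(2))
```

For example, `parse_poll_path("/wspoll/1")` gives a WebSocket connection with a one-second interval, which is the request used throughout this benchmark.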

Setup

The testing side consists of a load simulator that spawns and maintains a bajillion simultaneous connections, restarting them if need be, and a monitor that collects vital server data. Naturally, to minimize the simulator's impact on the server's performance, it was running on a separate machine. The overall setup looked like this:

For each back-end and communication protocol (so four runs in total) the simulator was ordered to incrementally spawn 1000 additional connections every several minutes, each of which issued a /(ws)poll/1/ request and waited for the reply chunks, restarting in case of a failure.
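The ramp-up and restart behaviour described above can be sketched roughly like this in Python. It is not the actual simulator: `connect` is a hypothetical coroutine standing in for "open a /(ws)poll/1 request and read chunks", and stopping on a clean close is an assumption on my part.

```python
import asyncio

def ramp_schedule(step=1000, limit=10000):
    """Target connection counts after each batch: 1000, 2000, ..., 10000."""
    return range(step, limit + 1, step)

async def keep_alive(connect, stats):
    """Run one simulated connection, restarting it whenever it fails.

    `connect` is a hypothetical coroutine function that opens the request
    and consumes reply chunks until the connection ends.
    """
    while True:
        try:
            await connect()
        except ConnectionError:
            stats["restarts"] += 1  # broken connection: count it and retry
        else:
            return  # clean close: stop (assumption; a real simulator may reconnect)
```

Each step of `ramp_schedule()` would start another batch of `keep_alive` tasks, and the `restarts` counter gives a rough idea of how many connections broke along the way.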

On the other side, the monitor focused on collecting Erlang VM data, such as total memory usage, SMP utilization or IO operations, over 5-minute periods, excluding the "boot-up time" of each new batch of connections and the "dump time" of dumping the benchmark data into a file.
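As a rough illustration of that windowing, here's a small Python sketch that keeps only the samples falling inside the 5-minute measurement period and reduces them to an average and a maximum. The sample format (timestamp, value) and the `boot_up_seconds` parameter are assumptions; the post doesn't say how long the boot-up time actually was.

```python
MEASURE_SECONDS = 5 * 60  # the 5-minute measurement period

def measurement_window(batch_start, boot_up_seconds):
    """Return (start, end) of the period that follows the boot-up time."""
    start = batch_start + boot_up_seconds
    return start, start + MEASURE_SECONDS

def summarize(samples, window):
    """Average and maximum of (timestamp, value) samples inside the window.

    Samples taken during boot-up or after the window (e.g. while dumping
    data to a file) are ignored. Returns None if nothing falls inside.
    """
    start, end = window
    values = [value for timestamp, value in samples if start <= timestamp < end]
    if not values:
        return None
    return {"avg": sum(values) / len(values), "max": max(values)}
```

Keeping both the average and the maximum per window is what makes it possible to plot the two lines for each data set.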

Each test was continued until 10000 simultaneous connections had been reached, mainly because I don't hate my machines THAT much... The most interesting results are plotted below, with some subjective commentary following...

Results

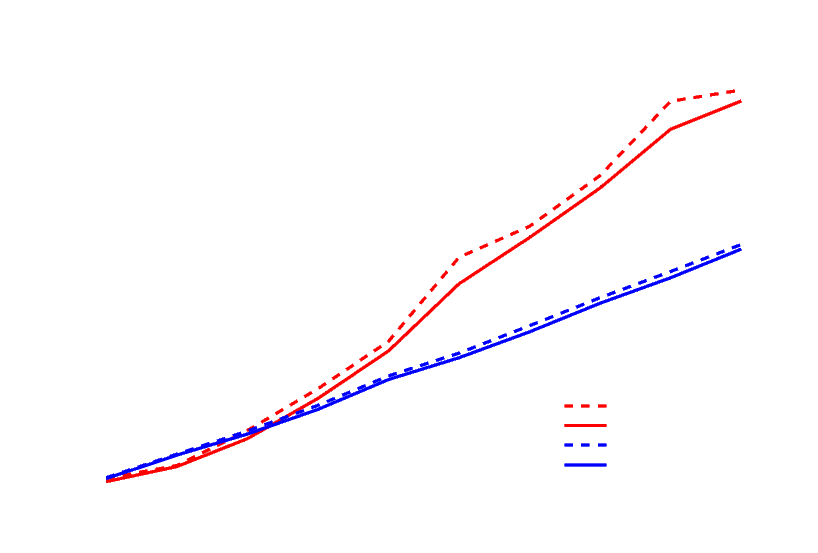

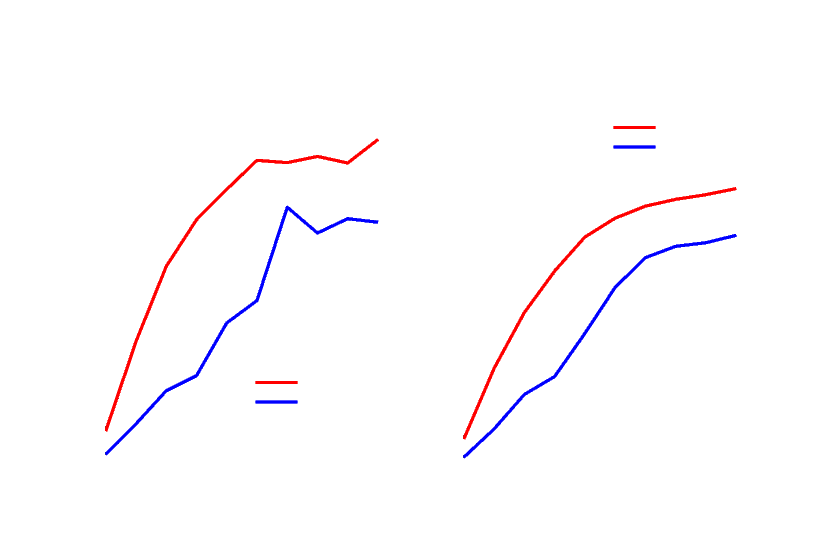

Total memory usage registered for the Cowboy back-end as a function of the number of simultaneous connections (I also included the maximal values for each data set, as this might give you an idea of the GC behaviour):

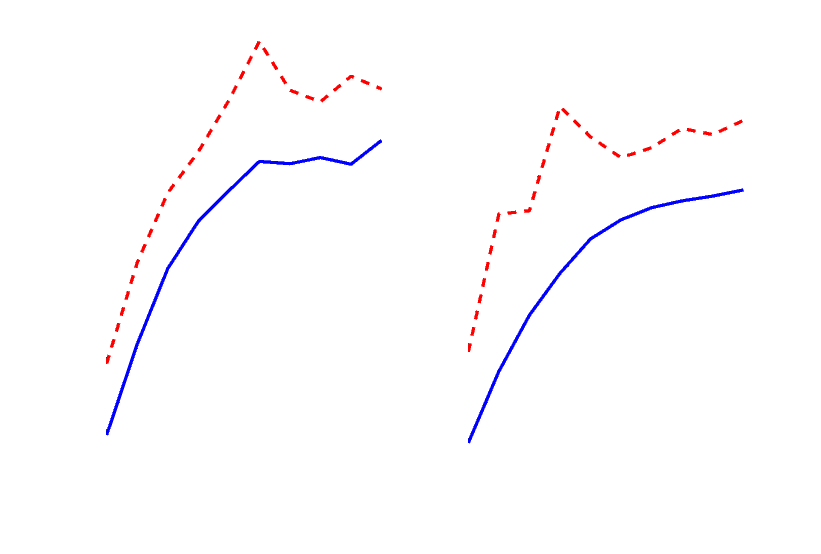

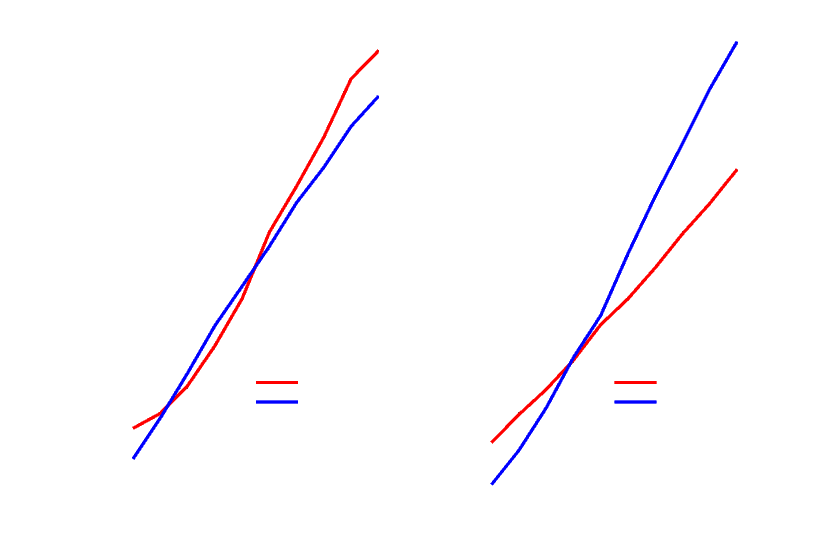

Average (blue line) and maximal (red, dashed line) SMP utilization of the Cowboy back-end (corresponds directly to the CPU utilization of the underlying machine):

Total memory usage of the MochiWeb back-end as a function of the number of simultaneous connections:

Average (blue) and maximal (red) SMP utilization of the MochiWeb back-end:

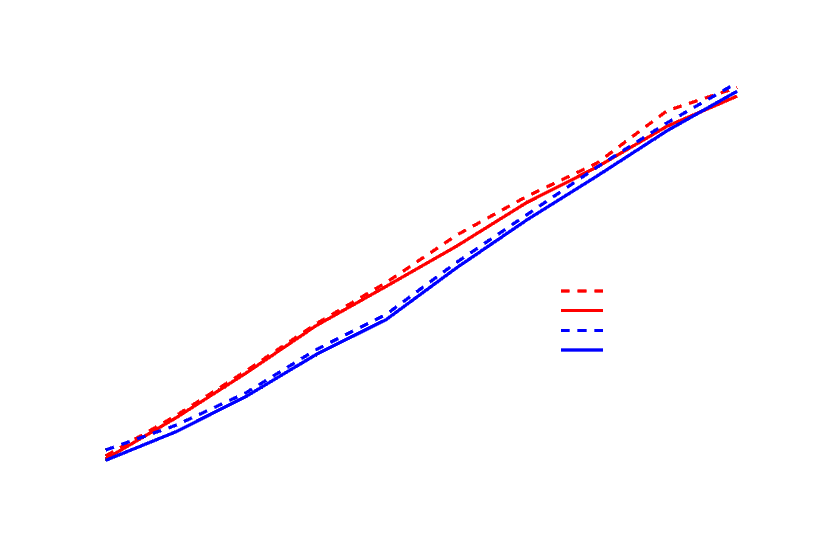

Total memory usage comparison of the back-ends:

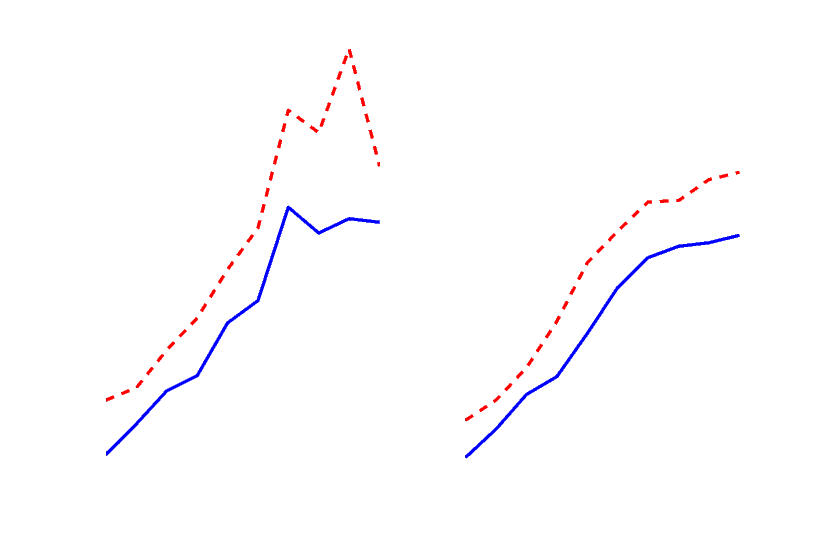

Average SMP utilization comparison of the back-ends:

Conclusion

It's easy to see that the Cowboy back-end is a bit more CPU-heavy. This is partially due to the fact that the test-server implementation hibernates heavily in order to preserve memory. Another interesting thing is the huge memory advantage of Cowboy/WebSocket over MochiWeb: a 25% difference for ten thousand connections is a pretty big deal.

One odd behaviour of the Cowboy/HTTP back-end is the way it scales its memory usage with the number of connections. I expected something similar to MochiWeb/HTTP, that is, a linear relation, but it turns out to be a bit more complex.

One curious thing about both of the back-ends (or possibly about my network), which I did not include above, was the error rate which I estimate at 1-4%. It was hard to measure, as the load simulator auto-magically restarts broken connections, but it was definitely a thing and I might want to investigate this some more in the future.
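One way to pin that number down in a future run would be to have the simulator tally every connection attempt and every failure explicitly. A minimal Python sketch, assuming a hypothetical `ErrorTally` object that the simulator updates after each attempt (nothing like it exists in the current setup):

```python
class ErrorTally:
    """Counts connection attempts and failures to estimate an error rate."""

    def __init__(self):
        self.attempts = 0
        self.failures = 0

    def record(self, ok):
        """Register one finished attempt; `ok` is False if it broke."""
        self.attempts += 1
        if not ok:
            self.failures += 1

    def rate(self):
        """Fraction of attempts that failed (0.0 when nothing was recorded)."""
        return self.failures / self.attempts if self.attempts else 0.0
```

This counts restarted connections as fresh attempts, so it measures failures per attempt rather than per original connection.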

I did not include the context switches or IO data in this post either, as these turned out to be, unsurprisingly (or perhaps surprisingly, I can't really decide), pretty similar for both back-ends and protocols.

Lastly, I'd love to repeat this benchmark with an even bigger number of connections, as I have a feeling that that's where Cowboy really excels, but I need to beef up my hardware first...

2013-09-05: Updated with some GitHub links.

2016-02-05: Adjusted some links.